SQL to dbt guide: Macros & Code Reusability

DRY principles done right. When to use macros for consistency and when plain SQL is clearer.

Hi there! Alejandro here 😊

Suscribe if you like to read about technical data & AI learnings, deep dives!

Enjoy the reading and let me know in the comments what you think about it 👨🏻💻

📝 TL;DR

Macros encode business logic that should behave identically across models. They’re not a tool for eliminating every repeated line

Before creating a macro, ask: does it repeat 3+ times, is the pattern identical except for parameters, and does consistency matter?

Over-abstraction is worse than repetition. If a macro makes the code harder to read, it shouldn’t exist

If you want to get more context on the dbt project use for these articles:

Most data teams reach for SCD2 patterns without

Everyone talks about DRY in programming, but few explain when you’ve gone too far.

dbt macros can make your code cleaner and more maintainable, or turn it into an unreadable mess of Jinja. The difference is knowing when repetition actually justifies the complexity.

The sql-to-dbt-series repo has both versions, raw SQL and macro-powered, so you can see exactly what macros buy you.

The Before and After

The repo keeps int_first_touchpoints.sql as a raw SQL view alongside `eph_first_touchpoints.sql`, which does the same thing with a macro. Side by side:

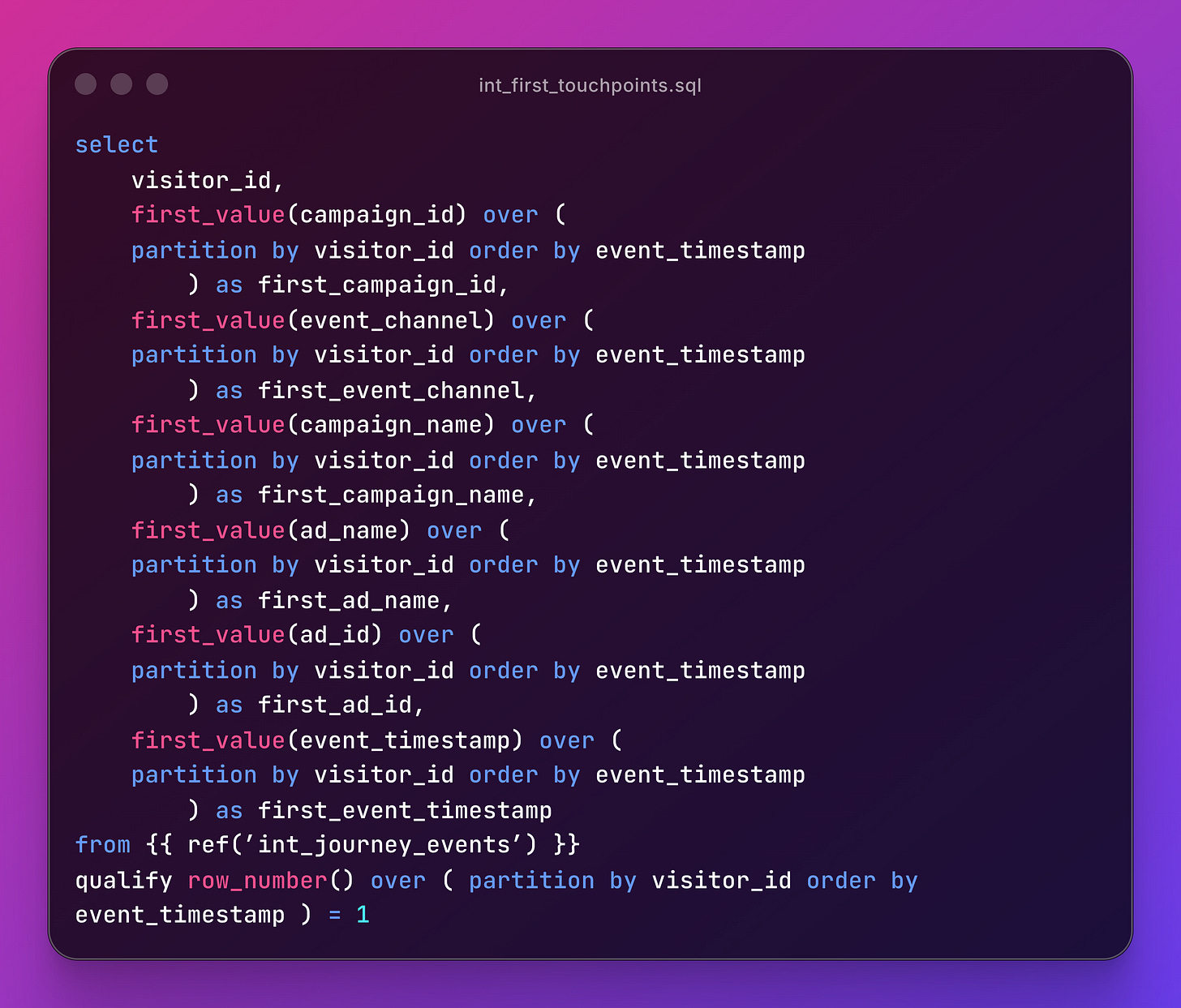

Raw SQL - int_first_touchpoints.sql:

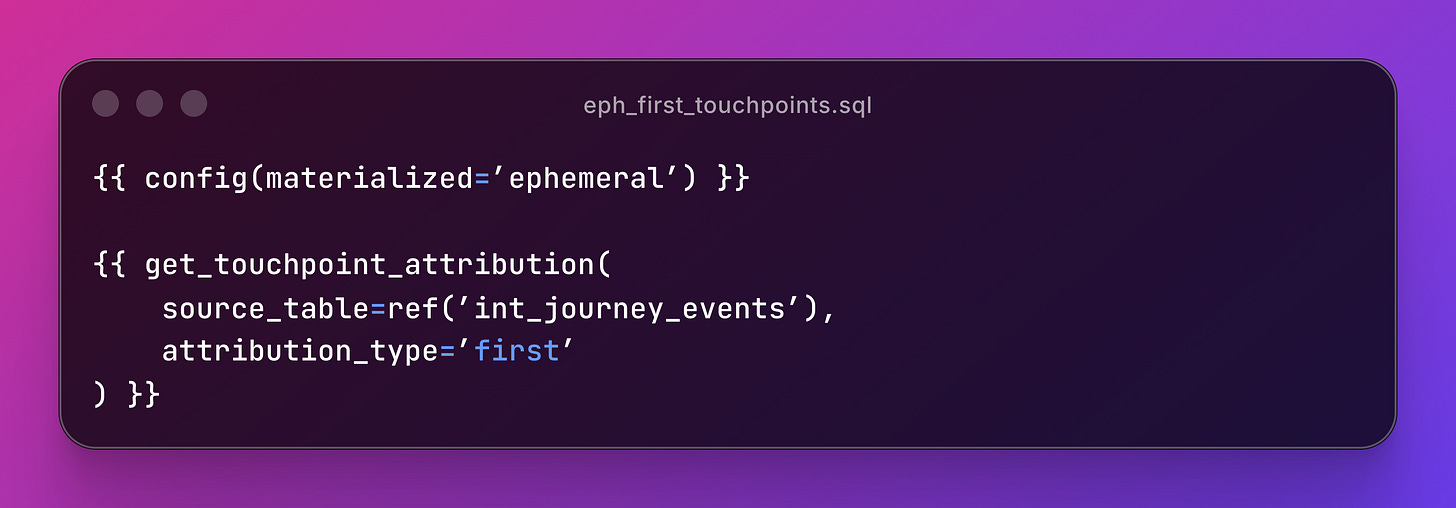

Macro version (`eph_first_touchpoints.sql`):

Same output. Six repeated `first_value(...)…` calls reduced to a single acro invocation.

And when you need last-touch attribution, you change attribution_type=’last`.

No copy-pasting window functions and flipping sort directions That’s when a macro earns its place.

The Macros That Earned Their Place

The project has five macros across two files. Four utility macros live in `classify_performance_tier.sql`, and the attribution macro stands alone.

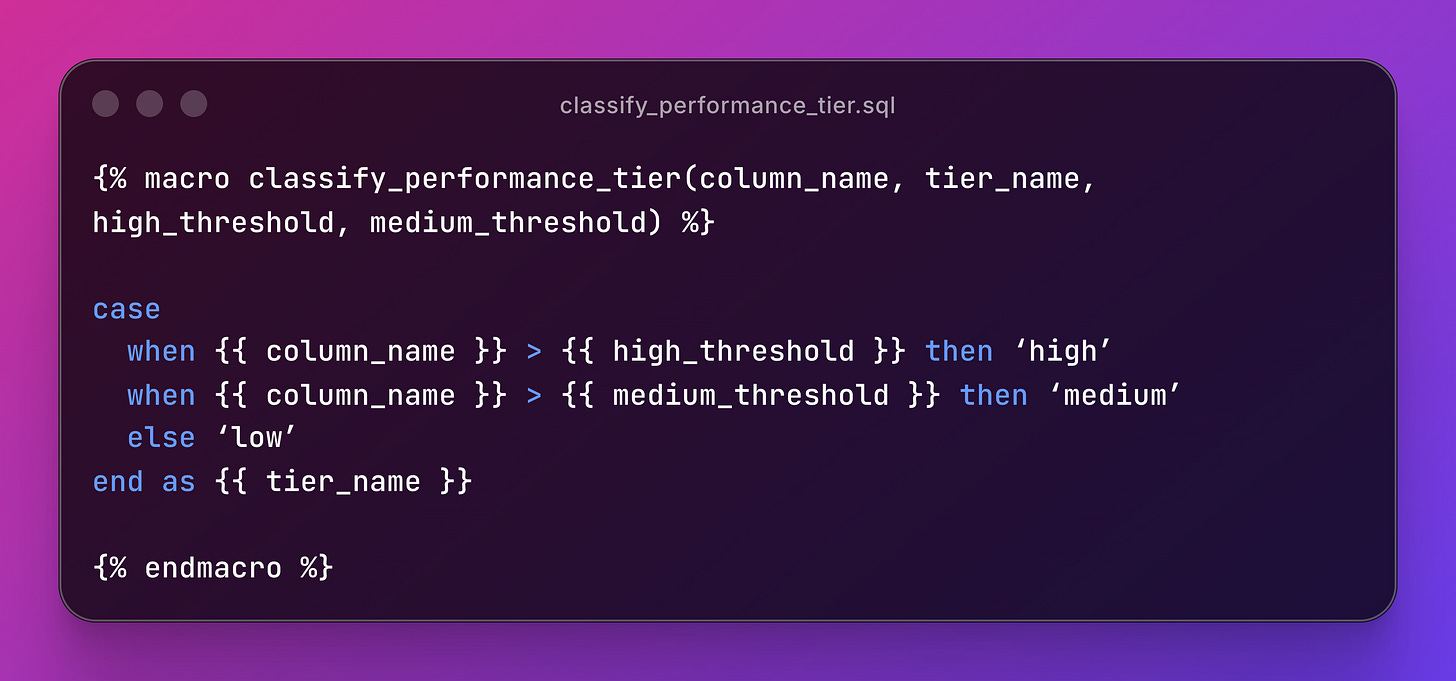

classify_performance_tier - Standardized tier classification:

Four parameters, four lines of SQL. When marketing decides “high CTR” now means 3% instead of 2%, you update one threshold. That’s the whole point.

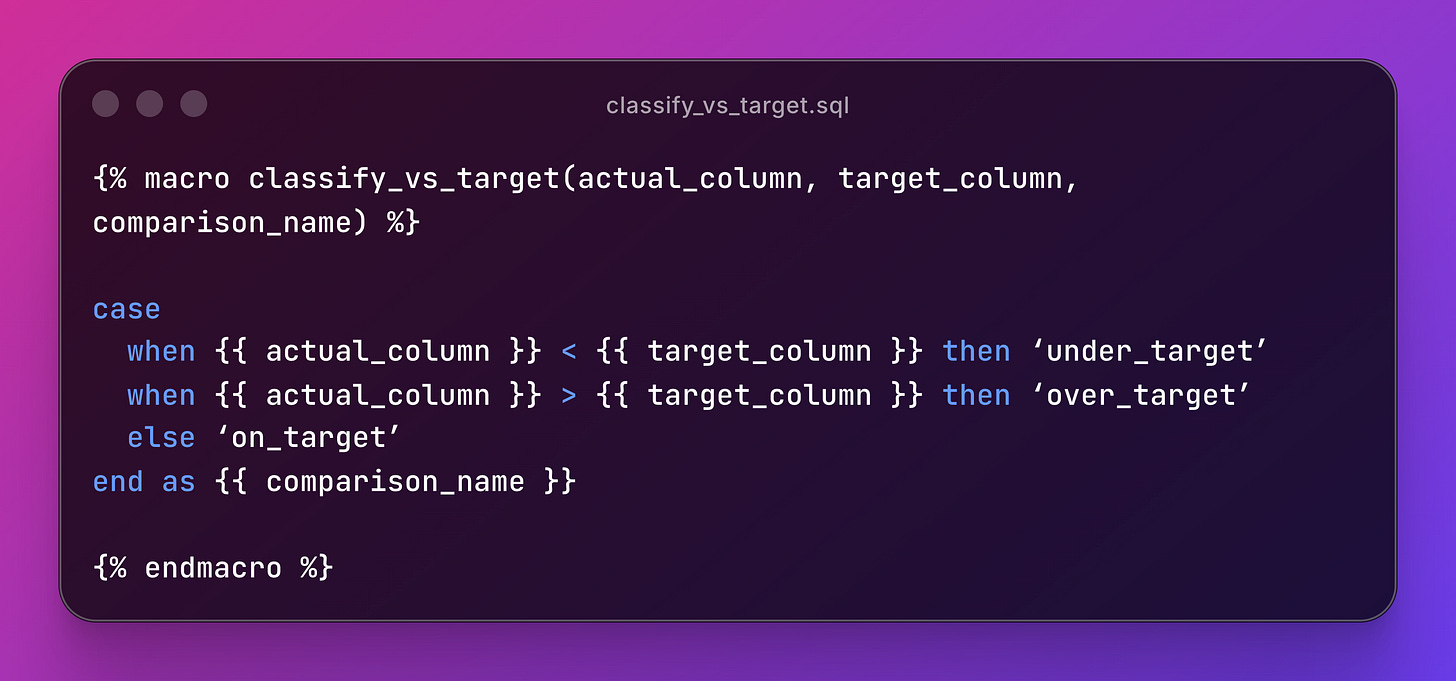

classify_vs_target - Actual vs target comparison:

The projects has 4 macros but for simplicity we kept it lean. Here’s all four working together in int_campaign_ads.sql:

Four macros, four metric patterns, one model. The compiled SQL is just CASE statements. No magic.

The last boss: get_touchpoint_attribution.sql.

Named window w as (...) keeps the SQL clean instead of repeating the partition clause five times. The additional_partitions parameter handles last-touch attribution, where you also need to partition by conversion_time.

When NOT to Create Macros

Not every CASE statement deserves a macro. Before wrapping SQL in Jinja, ask four questions:

Does this logic appear in 3+ places? If not, inline SQL is clearer.

Is the pattern identical except for parameters? If the logic varies between uses, a macro adds confusion.

Does this encode business logic that should be consistent? Tier definitions, division safety. These should behave the same everywhere.

Will someone else understand this in 6 months? If the macro requires more documentation than the SQL it replaces, reconsider.

The over-engineering trap:

-- Don’t do this

{% macro add_current_timestamp() %}

current_timestamp as date_transformed

{% endmacro %}

-- Just write this

current_timestamp as date_transformedA macro that saves zero cognitive load and adds a layer of indirection. The test is simple: if you’d spend more time finding the macro definition than reading the SQL, keep the SQL.

If you enjoyed the content, hit the like ❤️ button, share, comment, repost, and all those nice things people do when like stuff these days. Glad to know you made it to this part!

Hi, I am Alejandro Aboy. I am currently working as a Data Engineer. I started in digital marketing at 19. I gained experience in website tracking, advertising, and analytics. I also founded my agency. In 2021, I found my passion for data engineering. So, I shifted my career focus, despite lacking a CS degree. I’m now pursuing this path, leveraging my diverse experience and willingness to learn.